Product Page Optimization: What High-Performing eCommerce Brands Do Differently

Insights in this post come from our CRO team's decade of experience working with eCommerce brands. Edited by our in-house content team.

Most product page optimisation advice has the same problem: it was true for someone else, once, under conditions that no longer exist and probably didn't apply to you in the first place.

A brand ran a test. They changed their button colour. Conversions went up 21%. They published a case study. You read it, changed your button colour, and heard nothing but the faint sound of your own expectations deflating.

This is how eCommerce best practices travel: stripped of context, lagging by months, and applied in hope to stores with different products, different customers, and fundamentally different problems. The brands pulling 6–8%+ conversion rates, well above the industry average of 2–4%, didn't get there by reading the same blogs as everyone else.

They got there by running disciplined product-page CRO experiments on their own customers, honestly reading the results, and resisting the urge to stop before the data was actually ready.

Most eCommerce brands test elements on their product pages. High-performing brands test decisions in the micro-moments where a shopper moves from interested to committed, or quietly closes the tab.

The difference is subtle, but it's where most of the lift in eCommerce conversion rates actually lives. Below is how advanced teams approach product page optimisation and precisely where they diverge from received wisdom.

This post covers:

1. Product Copy: Most Brands Test Words. Advanced Brands Test Persuasion Structure

3. Social Proof: Most Brands Have Reviews. Most Shoppers Never See Them

5. Pricing Presentation: Most Brands Show a Price. Advanced Brands Test What That Price Signals

6. Urgency: Most Brands Create Pressure. Advanced Brands Test Whether It's Believed

7. Cross-Selling: Most Brands Add More. Advanced Brands Test When Less Converts Better

The standard approach to copy testing is to swap a headline or try a punchier opening. Fine. Most brands test headlines. Advanced brands test the order of persuasion.

But the more interesting question, the one advanced brands are actually asking, is whether the structure of the argument matters as much as the words inside it. It does. Possibly more.

a. Test benefit-led vs. problem-led openings: The core question is whether to lead with what the product does or the frustration it solves. Problem-led copy tends to win for anything addressing a clear pain point: skincare, fitness gear, sleep, storage. And that’s what we’ve found when we test product pages for eCommerce companies.

b. Test copy sequence, not just copy content: Most brands test what they say but never the order they say it in. Does the shopper need to understand the problem before the product makes sense? Nielsen Norman Group's research on product descriptions is instructive here: shoppers skim, read the first sentence more carefully than the rest, and abandon quickly when what they find doesn't connect. The opening sentence is doing enormous work. What comes second matters almost as much.

c. Test structured naming vs. branded naming: Clever product names are satisfying to write. Descriptive ones (material plus product type) tend to convert better on category pages and in search. Our client A/B tests show that structured product presentation drives significant growth for brands that apply it uniformly. It's not glamorous. It works.

The add-to-cart button is among the most-tested elements on any product page, and yet Baymard Institute's benchmark of 120+ leading eCommerce sites finds that more than half still have mediocre or worse UX in their buy section. The button, in other words, is not the solved problem it appears to be.

a. Test button copy with reassurance vs. ownership language: "Add to Bag - Free Returns" outperforms plain "Add to Cart" on higher-ticket items because it addresses risk at the exact moment the shopper is weighing it.

For impulse categories, ownership language ("Get Yours") tends to outperform service language. CTA optimisation tests commonly produce 5–15% conversion improvements, and the impact is largest when the current copy uses passive language that doesn't encourage action.

b. Test sticky vs. static add-to-cart on mobile: Our tests show that a sticky add-to-cart drawer, where the buy controls slide up from the bottom of the page, produced a significant increase in orders. Not every variation of "sticky" performs: a button that merely scrolls the user back to the existing buy section shows little difference. The format matters, not just the concept.

We ran this test for one of our clients: Hubman and Chubgirl, a niche eCommerce brand in art supplies and stationery. Keeping the ‘Add to cart’ CTA visible reduced scrolling friction and made the action available when intent was highest. This increased orders by 66% and reduced abandonment by 7.89% post-experiment. You can read the full case study here.

c. Test button colour contrast as an isolated variable: It's tempting to dismiss colour as superficial. The data disagrees. One of our CRO audits found that differentiating the add-to-cart button from surrounding CTAs, which had all been styled identically, produced a 20% increase in conversion rate. The problem wasn't the colour. It was that shoppers couldn't immediately identify which button to press.

Most brands have reviews. Most shoppers never see them because they're buried below the fold, where the hesitant shopper, the one who most needs reassurance, has already left.

But the question of where they live on the page and which ones get featured is where the most impactful eCommerce product page CRO happens.

93% of consumers say online reviews influence their purchase decisions, so a review nobody scrolls far enough to see is reassurance that never arrives.

a. Test review placement, below-fold module vs. near the CTA: PowerReviews' research shows that products with 11–30 reviews convert approximately 68% higher than those with zero. But that lift only materialises if shoppers actually see the reviews. Surfacing a review snippet directly beneath the product title or in the notification bar, as Drunk Elephant does, removes the need to scroll in search of reassurance at all.

b. Test objection-resolving hero reviews vs. general praise: A review that resolves a specific objection ("I was worried it would run small, it doesn't") does more work than five stars and "Love this product!" Our audits consistently flag this: most customers believe reviews older than three months aren't relevant, and reviews that address real, current concerns convert better than timeless, vague praise.

c. Test targeted review prompts vs. open-ended asks: The quality of reviews you receive is directly related to the quality of prompts you send. "Was it true to size? Did it work for sensitive skin?" generates answers future shoppers can actually use.

Open-ended prompts generate "great product, fast delivery", information that reassures almost no one. 63% of consumers say testimonials featuring real, specific customer experiences are more credible than anonymous quotes. Engineer for specificity from the start.

Baymard's research on product page usability found that the average eCommerce site has 24 structural usability issues on its product pages, and imagery is a consistent source of friction.

a. Test UGC vs. professional photography by category: 77% of shoppers say they'd rather see customer photos than professional shots before buying. For fashion and home goods, UGC tends to build more trust; it's imperfect, as real life is, which is oddly reassuring. For technical or functional products, professional imagery with specific callouts tends to hold its ground. The category determines the winner.

b. Test video presence vs. static-only pages: 74% of shoppers who watch an explainer video subsequently buy the product. Whether that reflects video quality or the elevated intent of someone willing to watch a two-minute product demonstration is an open question, but the ceiling for video on considered-purchase pages is higher than most brands assume.

The test is worth running, with load speed tracked as a co-variable.

At ConvertCart, we ran this test for one of our clients in the electronics and appliances space. Showcasing a “How it works” video helped users understand the process better and boosted conversions by 4%. You can read the full case study here.

c. Test hover-to-zoom selectively, not globally: Hover-to-zoom earns its place on products that reward close inspection: textured fabrics, fine hardware, intricate stitching. 40% of eCommerce stores don't support pinch or tap gestures for product images on mobile, which is a separate and equally expensive failure.

Charm pricing ($99 vs $100) still works. But it's table stakes, and the more interesting questions are elsewhere. More than 18% of eCommerce stores have poor discount visibility on product pages, leading to shopper confusion at precisely the moment a decision is forming.

a. Test crossed-out price vs. percentage saving: The crossed-out original price works better when the original is familiar or widely advertised. The percentage wins when the saving is large and instantly legible. 80% of shoppers say they feel more encouraged to make a first-time purchase when they find an offer, but the format of that offer shapes whether they believe it.

b. Test BNPL placement and framing: Showing "4 payments of $24.75" can meaningfully lift conversion for mid-range products by making the price feel manageable. It can also, depending on placement and phrasing, signal that the product is at the outer edge of what the shopper intended to spend.

Our recent tests showed that making an existing returns policy visible on product pages led to lifts. Pricing and reassurance are often the same test in disguise.

c. Test membership pricing proximity to the CTA: Surfacing loyalty pricing ("Members pay $79") near the main CTA can reframe the purchase: instead of buying a $90 product, the shopper is considering a $79 product and a membership with ongoing benefits. Stores with personalised pricing strategies see up to 35% revenue increases.

Done clumsily, this introduces a decision the shopper wasn't ready to make. Test the placement before assuming either outcome.

The question isn't whether urgency works. It's whether your shoppers believe yours: a CXL/Speero case study on the UK pet food brand Bob & Lush found that adding a time-sensitive shipping message, "Free next business day delivery if you order before 4 PM," produced a 27.1% revenue uplift over a six-week test. The message was real, placed where every interested shopper would see it, and addressed a genuine customer concern.

a. Test specific scarcity vs. vague urgency language: "Only 3 left in your size" is believable and actionable. "Selling fast!" is background noise. The former works because it's specific and plausible; the latter has been deployed so indiscriminately that a growing segment of shoppers now reads it as decoration.

b. Test honest urgency against a retention baseline: Urgency can lift a conversion today while degrading your customer base over time. Shoppers acquired through manufactured pressure show lower repurchase rates and higher returns.

Advanced brands now track both the immediate conversion lift and 90-day retention. The results of that second measurement are sometimes so uncomfortable that they prompt a complete change in strategy.

c. Test replenishment framing, convenience vs. commitment: For consumables, "Never run out" consistently outperforms "Subscribe and save", not because the saving is irrelevant, but because convenience is a lower-friction motivation than financial planning.

67% of B2B eCommerce professionals rate back-in-stock alerts as their top conversion strategy. The principle extends to subscription framing: frame around the problem it solves, not the mechanism.

The instinct is to add more. More recommendations, more bundles, more options. The data consistently argues the opposite. Choice paralysis is real, and product pages are not the place to test its limits. Advanced brands have largely converged on four recommendations as the ceiling: high enough to surface relevant options, but not so high that the primary decision becomes harder.

a. Test mini-cart cross-sell vs. below-fold recommendations module: The moment immediately after a shopper clicks "Add to Cart" is prime real estate that most brands underuse. A single well-chosen complement at a meaningfully lower price point converts at a higher rate than a full recommendations module below the fold.

CXL's research suggests the upsell offer should be at least 60% cheaper than the product; anything priced closer triggers comparison thinking, which is the enemy of an easy yes.
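The 60% guideline is simple enough to apply as a filter before a cross-sell ever reaches the mini-cart. The sketch below is illustrative only: the function name, the candidate list, and the prices are hypothetical, and the 0.60 threshold is just the figure quoted above turned into a parameter you would test for your own catalogue.

```python
def eligible_upsells(product_price, candidates, min_discount=0.60):
    """Keep only candidates priced at least `min_discount` below the main product.

    `candidates` is a list of (name, price) tuples. All names and prices
    here are made-up illustration data, not real catalogue entries.
    """
    price_ceiling = product_price * (1 - min_discount)
    return [(name, price) for name, price in candidates if price <= price_ceiling]


# Hypothetical example: a $100 product with three possible add-ons.
candidates = [("Brush set", 35.0), ("Carry case", 45.0), ("Premium kit", 55.0)]
shortlist = eligible_upsells(100.0, candidates)
print(shortlist)
```

With these made-up numbers, only the add-on priced under $40 survives the filter. The other two sit close enough to the main product's price to invite comparison.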

b. Test removing social sharing buttons: It sounds counterintuitive, but a test on Finnish hardware retailer Taloon.com, documented by CXL, found that removing social sharing buttons from product pages lifted add-to-cart conversions by 11.9%.

The shares on most product pages were zero, which, rather than being neutral, was functioning as negative social proof. The product page has one job.

Every element that pulls attention away from that job costs something.

c. Test personalised vs. generic recommendations for returning visitors: Stores with personalised recommendations see up to 4.5x higher conversion rates compared to stores without them.

Generic "you might also like" modules are largely wasted on returning shoppers who have already told you, through their behaviour, exactly what they're interested in. The data needs to be clean for this to work, which is where most brands quietly fail.

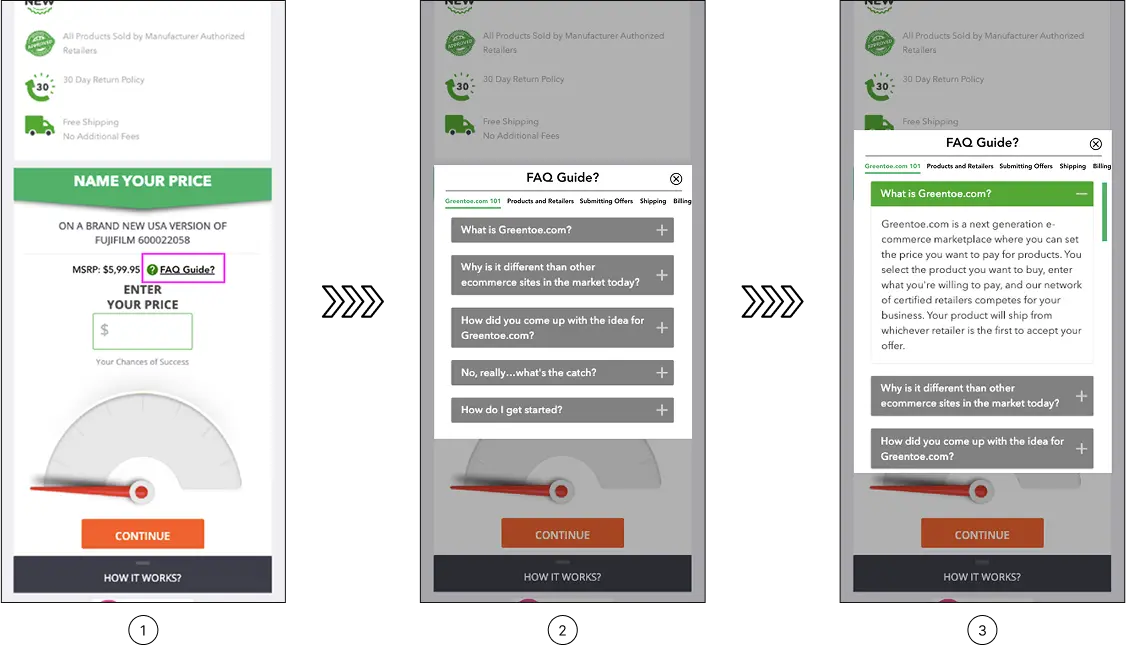

53% of US online customers will abandon a purchase if they can't quickly find an answer to a question. The brands handling this well don't make shoppers hunt. They put the answer exactly where the doubt arises.

a. Test FAQ microcopy near the CTA vs. a collapsed bottom-page section: FAQs buried after the reviews, the cross-sells, and the brand story are not available when the shopper needs them. The same content, surfaced as a small, precise link near the CTA ("Will this work for sensitive skin?" positioned right above the buy button), drives measurably more engagement. It's a placement decision, not a redesign.

b. Test proactive vs. reactive live chat triggers: Proactive triggers, such as a chat prompt opening after 90 seconds of inactivity, outperform passive chat widgets for considered purchases. 88% of users never return to a site after a bad experience; catching a confused shopper mid-page with the right prompt is considerably cheaper than losing them entirely.

c. Test recovery-focused 404 pages vs. generic apology pages: 404 pages that surface relevant product alternatives recover a real percentage of otherwise-lost traffic.

Brands that treat their 404 page as a dead end are leaving a recovery mechanism unused. It costs almost nothing to build, and unlike most optimisations, has no meaningful downside case.

Here is the uncomfortable mathematics of A/B testing: according to industry research cited by VWO, only 1 in 7 tests produces a statistically significant result.

That sounds bleak until you consider what the same research found: brands with structured, organised testing programmes see that figure climb to roughly 1 in 3.

The methodology, not the ideas, is where the leverage lives.
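For readers who want to see what "statistically significant" means in practice, here is a minimal sketch of one common check, a pooled two-proportion z-test, written with Python's standard library only. The visitor and order counts are invented for illustration, and most testing tools run their own (often different) statistics, so treat this as a way to sanity-check a result rather than a description of how any particular platform works.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    conv_a / n_a is the control, conv_b / n_b is the variation.
    Uses the pooled standard error, a standard textbook approach.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the normal distribution: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 10,000 visitors per arm, 300 vs. 360 orders.
z, p = two_proportion_z_test(300, 10_000, 360, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below the threshold you chose in advance (0.05 is the usual convention) is what most teams mean by a significant result. The threshold has to be fixed before the test starts, not after the numbers look good.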

a. Prioritise tests before running them: Score each experiment on three dimensions: likely impact, confidence in the hypothesis, and ease of implementation. High-scoring tests run first. Low-scoring tests are dropped.

The alternative, running tests in the order they occur to you, is how brands stay busy without getting faster.
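A scoring pass like this fits comfortably in a spreadsheet, but a short script makes the logic explicit. The sketch below assumes each dimension is rated from 1 to 10 and that the three ratings are simply averaged. The scale, the averaging, the cut-off of 5.0, and the example backlog are all assumptions made for illustration, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    name: str
    impact: int       # likely impact, rated 1-10 (assumed scale)
    confidence: int   # confidence in the hypothesis, 1-10
    ease: int         # ease of implementation, 1-10

    @property
    def score(self) -> float:
        # Simple unweighted average; weighting is a choice, not a rule.
        return (self.impact + self.confidence + self.ease) / 3


def prioritise(experiments, min_score=5.0):
    """Drop low scorers, then return the rest with the highest score first."""
    kept = [e for e in experiments if e.score >= min_score]
    return sorted(kept, key=lambda e: e.score, reverse=True)


# Hypothetical backlog.
backlog = [
    Experiment("Sticky add-to-cart on mobile", impact=8, confidence=7, ease=6),
    Experiment("Review snippet near CTA", impact=7, confidence=8, ease=9),
    Experiment("Full gallery redesign", impact=6, confidence=3, ease=2),
]
for exp in prioritise(backlog):
    print(f"{exp.score:.1f}  {exp.name}")
```

The value is less in the arithmetic than in forcing every idea through the same three questions before it takes up a testing slot.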

b. Run tests long enough to trust the results: Remember that a week of data is almost never enough for anything other than extremely high-traffic pages. Three to four weeks is a more honest minimum.

Most brands stop early because a promising result creates pressure to ship the winner before it has earned that status.
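One way to resist stopping early is to estimate the required duration before the test launches. The sketch below uses the standard sample-size approximation for comparing two proportions, then rounds up to whole weeks so every weekday is represented equally. The baseline rate, the lift you hope to detect, and the traffic figure are hypothetical inputs. The defaults of 5% significance and 80% power are common conventions, not requirements.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in each arm to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_needed(baseline_rate, relative_lift, daily_visitors, variants=2):
    """Whole weeks of traffic required, assuming visitors are split evenly."""
    total_visitors = visitors_per_variant(baseline_rate, relative_lift) * variants
    days = math.ceil(total_visitors / daily_visitors)
    return math.ceil(days / 7)


# Hypothetical page: 3% baseline, hoping to detect a 15% relative lift,
# with 2,000 visitors a day reaching the product page.
print(visitors_per_variant(0.03, 0.15))
print(weeks_needed(0.03, 0.15, daily_visitors=2_000))
```

Under these assumptions the estimate comes out at roughly 24,000 visitors per variant, or about four weeks at that traffic level. Smaller expected lifts push the requirement up sharply, which is why low-traffic pages are better served by bolder variations than by longer tests.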

c. Read results by segment, not just in aggregate: A variation that "lost" overall may have won decisively among new visitors, mobile users, or shoppers above a certain order value.

That is itself a finding. The brands mining their results this way find more value in their losing tests than most teams find in their winners.
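A segment read can be as simple as grouping the same test data by device or visitor type before comparing rates. The sketch below assumes results are exported as rows of (segment, variant, visitors, conversions). That layout, the segment names, and the numbers are all hypothetical.

```python
from collections import defaultdict


def lift_by_segment(rows):
    """Return {segment: relative lift of the variation over control}.

    Each row is (segment, variant, visitors, conversions), where variant
    is "control" or "variation". Segments missing an arm, or with zero
    control conversions, are skipped because no lift can be computed.
    """
    totals = defaultdict(lambda: {"control": [0, 0], "variation": [0, 0]})
    for segment, variant, visitors, conversions in rows:
        totals[segment][variant][0] += visitors
        totals[segment][variant][1] += conversions

    report = {}
    for segment, arms in totals.items():
        control_visitors, control_orders = arms["control"]
        variation_visitors, variation_orders = arms["variation"]
        if control_visitors == 0 or variation_visitors == 0 or control_orders == 0:
            continue
        control_rate = control_orders / control_visitors
        variation_rate = variation_orders / variation_visitors
        report[segment] = (variation_rate - control_rate) / control_rate
    return report


# Hypothetical export: the variation loses overall (340 vs. 350 orders)
# but wins on mobile.
rows = [
    ("mobile", "control", 5_000, 150),
    ("mobile", "variation", 5_000, 180),
    ("desktop", "control", 5_000, 200),
    ("desktop", "variation", 5_000, 160),
]
for segment, lift in lift_by_segment(rows).items():
    print(f"{segment}: {lift:+.0%}")
```

One caution: each segment has less traffic than the whole test, and the more segments you slice, the more likely one of them looks like a winner by chance. Treat a segment result as a hypothesis for the next test rather than a verdict.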

A failed test, properly examined, is an answer to a question you asked. That is not nothing.

The advantage isn't in knowing what to test. It's in knowing why shoppers hesitate and designing every experiment around that hesitation. Product pages aren't a problem you solve once.

They're a standing conversation with your customers, one that shifts as your catalogue evolves and your audience changes. The brands winning aren't the ones that found the right answers. They're the ones who kept asking sharper questions.

The gap between a 3% conversion rate and a 6% one almost never comes down to a single fix. It comes down to knowing where product page friction lives and which CRO tests will move the needle fastest.

A ConvertCart audit looks at your product pages the way your most hesitant customer does. We identify exactly where shoppers are losing confidence, stalling, or leaving, and build a testing roadmap focused on what's most likely to move your numbers in your category for your audience.

Related Reading:

The Mobile Product Page Audit: A Checklist for eCommerce Teams

Why Your eCommerce Store Isn’t Converting (Even With Traffic)